Introduction

Over the past few years, new AI models have significantly improved their ability to interpret documents directly from images or scanned PDFs. At the same time, specialized document processing services continue to evolve with improved parsing capabilities and pre-trained invoice models.

To better understand the current state of invoice extraction technology, we conducted a benchmark comparing multiple widely used AI systems.

In this benchmark, we evaluate eight AI-powered invoice extraction systems on a dataset of 20 invoices from different years and formats. The goal is to measure how accurately each system extracts structured invoice fields and item-level attributes without any fine-tuning or custom training.

The benchmark includes both specialized document AI services and modern multimodal large language models (LLMs):

- Amazon Analyze Expense API

- Azure AI Document Intelligence – Invoice Prebuilt Model

- Google Document AI – Invoice Parser

- GPT 5.2

- GPT 5 Mini

- Claude Sonnet 4.5

- Gemini 3 Flash

- Gemini 3 Pro

The results provide an updated view of the current capabilities of AI systems for invoice data extraction and offer practical insights for organizations selecting tools for automated document processing.

Benchmark: Best LLM For Invoice Processing in 2025

All systems were evaluated out-of-the-box, without any additional training or customization, to simulate how they would perform in real-world deployments.

Systems Evaluated

This benchmark compares eight different AI systems capable of extracting structured data from invoices. The evaluated solutions include both specialized document AI services designed specifically for forms and invoices and multimodal large language models capable of interpreting document images.

Document AI Services

Amazon Analyze Expense API (AWS)

Amazon’s document processing service designed specifically for invoices and receipts. It extracts key financial fields and item-level information from scanned or digital invoices.

Azure AI Document Intelligence — Invoice Prebuilt Model

Microsoft’s invoice parsing solution that uses a pre-trained model to detect and extract structured invoice fields.

Google Document AI — Invoice Parser

Google’s document understanding system designed to extract structured data from invoices and other financial documents.

Large Language Models

GPT 5.2

A multimodal language model capable of processing document images and extracting structured information through prompting.

GPT 5 Mini

A lightweight variant of GPT designed to offer similar capabilities with lower computational cost.

Claude Sonnet 4.5

Anthropic’s multimodal model that can interpret document layouts and extract structured data from images.

Gemini 3 Flash

A faster and more lightweight Gemini model optimized for speed and efficiency.

Gemini 3 Pro

Google’s flagship multimodal model designed for advanced reasoning and document understanding.

Note: Grok 4.1 Fast Reasoning was also tested on two sample invoices. It scored only 23% and 13% accuracy on them and was therefore excluded from the full benchmark evaluation.

Benchmark Dataset

To ensure a consistent and fair comparison across all evaluated systems, a standardized dataset of 20 invoices was used for the benchmark. The invoices span multiple years and vary in structure, formatting, and number of line items, reflecting the diversity typically encountered in real-world business documents. Older invoices often contain lower-quality scans or less standardized layouts, which can introduce additional challenges for automated extraction systems.

Another important aspect of the dataset is the variation in item counts. Some invoices contain only a single line item, while others include up to 12 individual items, requiring the models to correctly detect and extract structured information from tabular sections.

Invoice samples

| № | Year | Number of Items |
|---|---|---|
| 1 | 2018 | 4 |
| 2 | 2009 | 1 |
| 3 | 2018 | 3 |
| 4 | 2009 | 1 |
| 5 | 2018 | 12 |
| 6 | 2018 | 2 |
| 7 | 2015 | 3 |
| 8 | 2016 | 2 |
| 9 | 2008 | 3 |
| 10 | 2011 | 2 |
| 11 | 2017 | 2 |
| 12 | 2006 | 4 |
| 13 | 2009 | 2 |
| 14 | 2019 | 3 |
| 15 | 2018 | 2 |
| 16 | 2018 | 1 |
| 17 | 2012 | 3 |
| 18 | 2010 | 4 |
| 19 | 2020 | 3 |
| 20 | 2012 | 3 |
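As a quick sanity check on the dataset description, the table above can be summarized in a few lines of Python. This is only an illustrative sketch: the tuples are copied from the table, and the names `INVOICES` and `summary` are made up for this example.

```python
from collections import Counter

# (invoice number, year, number of line items), copied from the table above.
INVOICES = [
    (1, 2018, 4), (2, 2009, 1), (3, 2018, 3), (4, 2009, 1), (5, 2018, 12),
    (6, 2018, 2), (7, 2015, 3), (8, 2016, 2), (9, 2008, 3), (10, 2011, 2),
    (11, 2017, 2), (12, 2006, 4), (13, 2009, 2), (14, 2019, 3), (15, 2018, 2),
    (16, 2018, 1), (17, 2012, 3), (18, 2010, 4), (19, 2020, 3), (20, 2012, 3),
]

years = [year for _, year, _ in INVOICES]
item_counts = [items for _, _, items in INVOICES]

summary = {
    "invoices": len(INVOICES),
    "year_range": (min(years), max(years)),
    "min_items": min(item_counts),
    "max_items": max(item_counts),
    "total_items": sum(item_counts),
}

# How many invoices come from each year.
invoices_per_year = Counter(years)

print(summary)
print(invoices_per_year.most_common(3))
```

The summary confirms the range described in the text: invoices contain between 1 and 12 line items, and the documents span 2006 to 2020.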

Extracted Fields and Data Normalization

To evaluate the performance of each system consistently, a predefined set of 16 invoice fields was selected for extraction and comparison. These fields represent the core information typically required for automated invoice processing workflows, including document metadata, financial values, party information, and line-item attributes.

Because different AI services use their own naming conventions for extracted fields, all outputs were normalized to a common schema referred to as the Resulting Field format. This allowed the results from all systems to be compared directly.

For document AI services such as AWS, Azure, and Google Document AI, the extracted fields were mapped from their native output names to the unified format. Multimodal models (GPT, Claude, and Gemini) were prompted to return results directly using the same field names.
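The exact prompt used for the multimodal models is not reproduced in this article. The sketch below only illustrates one way to pin the output to the unified field names. The prompt wording, the nested `Items` list, and the helper names `build_prompt` and `parse_response` are all assumptions made for this example, and no model API is called.

```python
import json

# Unified header-level field names used in this benchmark.
HEADER_FIELDS = [
    "Invoice Id", "Invoice Date", "Net Amount", "Tax Amount", "Total Amount",
    "Due Date", "Purchase Order", "Payment Terms", "Customer Address",
    "Customer Name", "Vendor Address", "Vendor Name",
]
# Item-level attributes; nesting them under an "Items" list is an assumption.
ITEM_FIELDS = ["Description", "Quantity", "Unit Price", "Amount"]


def build_prompt() -> str:
    """Return a hypothetical extraction prompt that fixes the output keys."""
    template = {name: None for name in HEADER_FIELDS}
    template["Items"] = [{name: None for name in ITEM_FIELDS}]
    return (
        "Extract the invoice data from the attached image. "
        "Reply with JSON only, using exactly these keys and null for "
        "anything that is not present:\n" + json.dumps(template, indent=2)
    )


def parse_response(text: str) -> dict:
    """Keep only the expected keys and fill missing ones with None."""
    data = json.loads(text)
    result = {name: data.get(name) for name in HEADER_FIELDS}
    result["Items"] = [
        {name: item.get(name) for name in ITEM_FIELDS}
        for item in data.get("Items") or []
    ]
    return result
```

Dropping unexpected keys and filling missing ones with `None` keeps every model's output in the same shape, which makes field-by-field comparison straightforward.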

The following table shows the mapping between the unified field structure and the corresponding fields returned by each document AI system.

| № | Resulting Field | AWS | Azure | Google |
|---|---|---|---|---|
| 1 | Invoice Id | INVOICE_RECEIPT_ID | InvoiceId | invoice_id |
| 2 | Invoice Date | INVOICE_RECEIPT_DATE | InvoiceDate | invoice_date |
| 3 | Net Amount | SUBTOTAL | SubTotal | net_amount |
| 4 | Tax Amount | TAX | TotalTax | total_tax_amount |
| 5 | Total Amount | TOTAL | InvoiceTotal | total_amount |
| 6 | Due Date | DUE_DATE | DueDate | due_date |
| 7 | Purchase Order | PO_NUMBER | PurchaseOrder | purchase_order |
| 8 | Payment Terms | PAYMENT_TERMS | PaymentTerm | payment_terms |
| 9 | Customer Address | RECEIVER_ADDRESS | BillingAddress | receiver_address |
| 10 | Customer Name | RECEIVER_NAME | CustomerName | receiver_name |
| 11 | Vendor Address | VENDOR_ADDRESS | VendorAddress | supplier_address |
| 12 | Vendor Name | VENDOR_NAME | VendorName | supplier_name |
| 13 | Item: Description | ITEM | Description | description |
| 14 | Item: Quantity | QUANTITY | Quantity | quantity |
| 15 | Item: Unit Price | UNIT_PRICE | UnitPrice | unit_price |
| 16 | Item: Amount | PRICE | Amount | amount |

Item-level extraction is particularly important for invoice automation, as accounting and procurement systems typically rely on structured line-item data for reconciliation, inventory tracking, and auditing.
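The normalization step described above can be sketched as a simple lookup. This is a minimal illustration, not the benchmark's actual code: it assumes each service's response has already been flattened into a dict of native field name to value (real API responses are nested and need provider-specific unpacking, which is not shown), and the names `ROWS`, `FIELD_MAP`, and `normalize` are hypothetical.

```python
# Rows follow the mapping table: (Resulting Field, AWS, Azure, Google).
ROWS = [
    ("Invoice Id", "INVOICE_RECEIPT_ID", "InvoiceId", "invoice_id"),
    ("Invoice Date", "INVOICE_RECEIPT_DATE", "InvoiceDate", "invoice_date"),
    ("Net Amount", "SUBTOTAL", "SubTotal", "net_amount"),
    ("Tax Amount", "TAX", "TotalTax", "total_tax_amount"),
    ("Total Amount", "TOTAL", "InvoiceTotal", "total_amount"),
    ("Due Date", "DUE_DATE", "DueDate", "due_date"),
    ("Purchase Order", "PO_NUMBER", "PurchaseOrder", "purchase_order"),
    ("Payment Terms", "PAYMENT_TERMS", "PaymentTerm", "payment_terms"),
    ("Customer Address", "RECEIVER_ADDRESS", "BillingAddress", "receiver_address"),
    ("Customer Name", "RECEIVER_NAME", "CustomerName", "receiver_name"),
    ("Vendor Address", "VENDOR_ADDRESS", "VendorAddress", "supplier_address"),
    ("Vendor Name", "VENDOR_NAME", "VendorName", "supplier_name"),
    ("Item: Description", "ITEM", "Description", "description"),
    ("Item: Quantity", "QUANTITY", "Quantity", "quantity"),
    ("Item: Unit Price", "UNIT_PRICE", "UnitPrice", "unit_price"),
    ("Item: Amount", "PRICE", "Amount", "amount"),
]

PROVIDERS = ("aws", "azure", "google")

# Per-provider lookup: native field name -> unified field name.
FIELD_MAP = {
    provider: {row[i + 1]: row[0] for row in ROWS}
    for i, provider in enumerate(PROVIDERS)
}


def normalize(provider: str, raw: dict) -> dict:
    """Rename native fields to the unified schema; drop unmapped fields."""
    mapping = FIELD_MAP[provider]
    return {mapping[name]: value for name, value in raw.items() if name in mapping}


print(normalize("aws", {"SUBTOTAL": "100.00", "TOTAL": "120.00"}))
print(normalize("google", {"supplier_name": "Acme"}))
```

Keeping the mapping as data rather than code means a renamed or newly supported field only requires editing one row.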

Benchmark Results

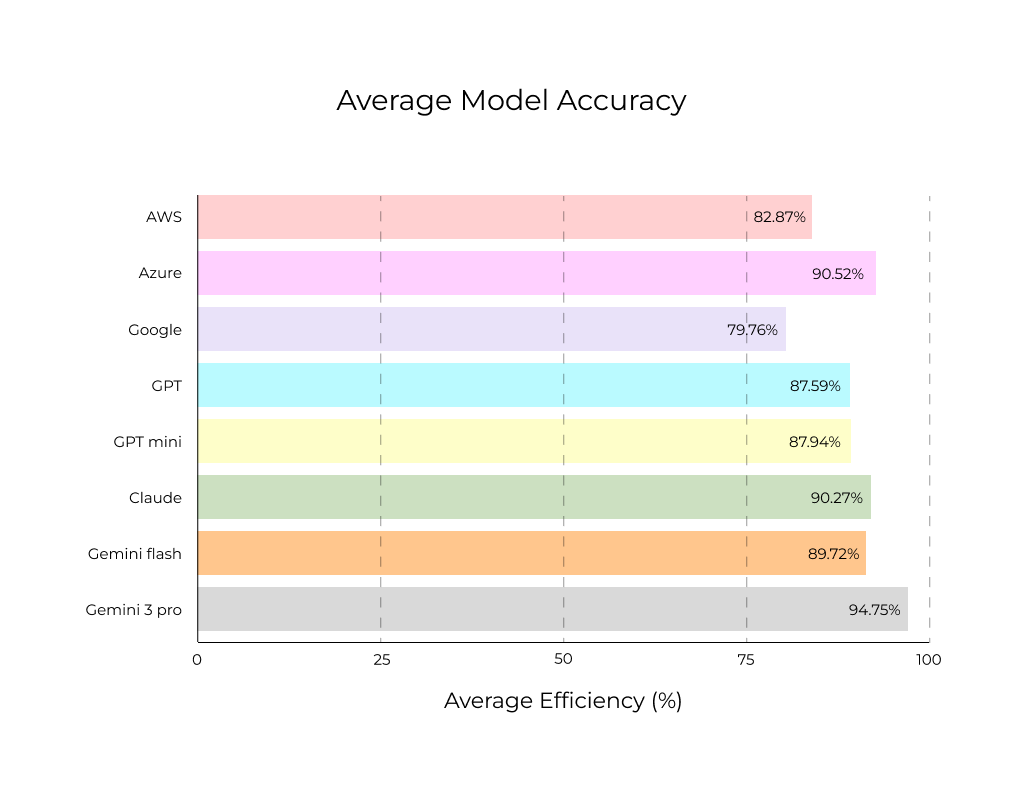

The benchmark compares the overall extraction accuracy of eight AI systems across the dataset of 20 invoices.
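The article does not spell out the scoring formula, so the following is only one plausible way to compute a field-level accuracy score, shown for illustration. It assumes accuracy is the share of expected fields whose extracted value matches after trimming whitespace and ignoring case, averaged over invoices. The function names and the matching rule are assumptions, not the benchmark's documented method.

```python
def _canon(value) -> str:
    """Collapse whitespace and ignore case before comparing (an assumption)."""
    return " ".join(str(value).split()).casefold()


def invoice_accuracy(expected: dict, extracted: dict) -> float:
    """Percentage of expected fields that were extracted with a matching value."""
    if not expected:
        raise ValueError("expected must contain at least one field")
    hits = sum(
        1
        for field, value in expected.items()
        if extracted.get(field) is not None
        and _canon(extracted[field]) == _canon(value)
    )
    return 100.0 * hits / len(expected)


def benchmark_accuracy(pairs) -> float:
    """Average the per-invoice accuracy over (expected, extracted) pairs."""
    scores = [invoice_accuracy(expected, extracted) for expected, extracted in pairs]
    return sum(scores) / len(scores)


expected = {
    "Invoice Id": "INV-1",
    "Total Amount": "100.00",
    "Vendor Name": "Acme Ltd",
    "Due Date": "2018-01-01",
}
extracted = {"Invoice Id": "inv-1", "Total Amount": "100.00", "Vendor Name": "ACME  Ltd"}

print(invoice_accuracy(expected, extracted))  # 3 of 4 fields match -> 75.0
```

A stricter or looser matching rule (for example, numeric comparison for amounts or date parsing) would change the scores, so results are only comparable when the same rule is applied to every system.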

Comparison with the Previous Benchmark

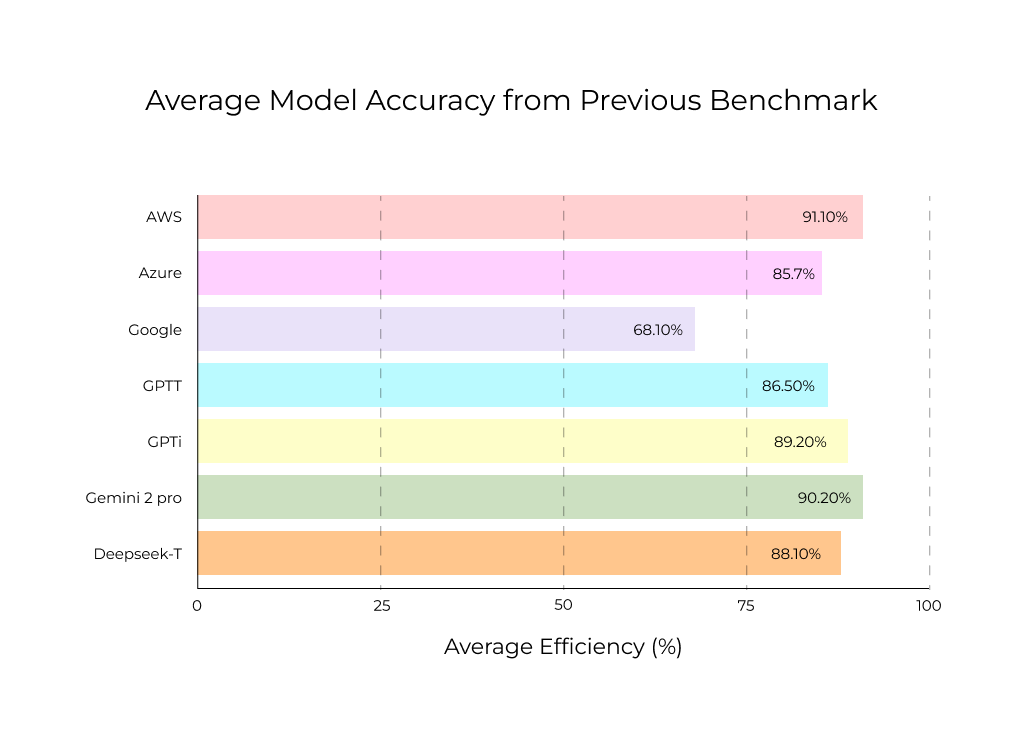

To better understand how invoice extraction performance is evolving, it is useful to compare the current results with those from the previous benchmark conducted in March 2025.

While the exact set of evaluated models differs between the two benchmarks, the comparison highlights several notable shifts in performance across platforms:

- Most notably, the new benchmark introduces Gemini 3 Pro, which achieves 94.75% accuracy, exceeding the performance of all models tested in the previous evaluation.

- At the same time, some traditional document AI systems show significant changes in performance between the two benchmarks. Azure AI Document Intelligence improves considerably, while AWS Analyze Expense shows a noticeable decrease in accuracy compared to the earlier results.

These differences illustrate how rapidly the capabilities of document processing systems are evolving, particularly with the emergence of large language models capable of interpreting documents directly from images.

Comparing AI Invoice Processing in 2025 and 2026

Comparing the results of the current benchmark with those from the previous evaluation reveals several notable shifts in model performance and overall trends in invoice extraction capabilities.

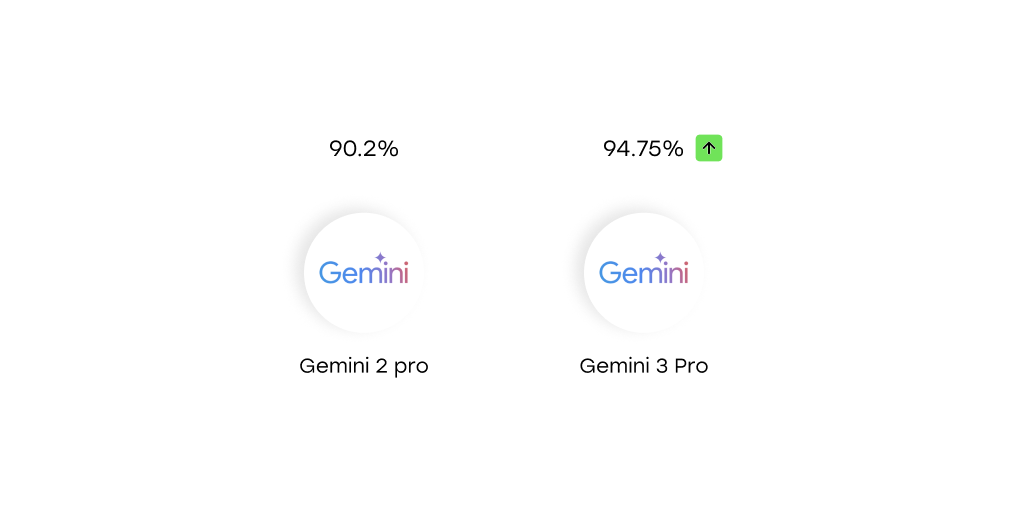

New Performance Leader: Gemini 3 Pro

The most significant change in the current benchmark is the emergence of Gemini 3 Pro as the top-performing system. The model achieved an average extraction accuracy of 94.75%, the highest among all evaluated solutions.

In the previous benchmark, Gemini 2 Pro reached 90.2% accuracy, already placing it among the top-performing models. The new result is about 4.5 percentage points higher and establishes Gemini 3 Pro as the clear leader in this evaluation, outperforming the next-best systems by several percentage points.

This result highlights the rapid progress in multimodal large language models and their ability to interpret structured business documents such as invoices.

Strong Improvement in Azure Performance


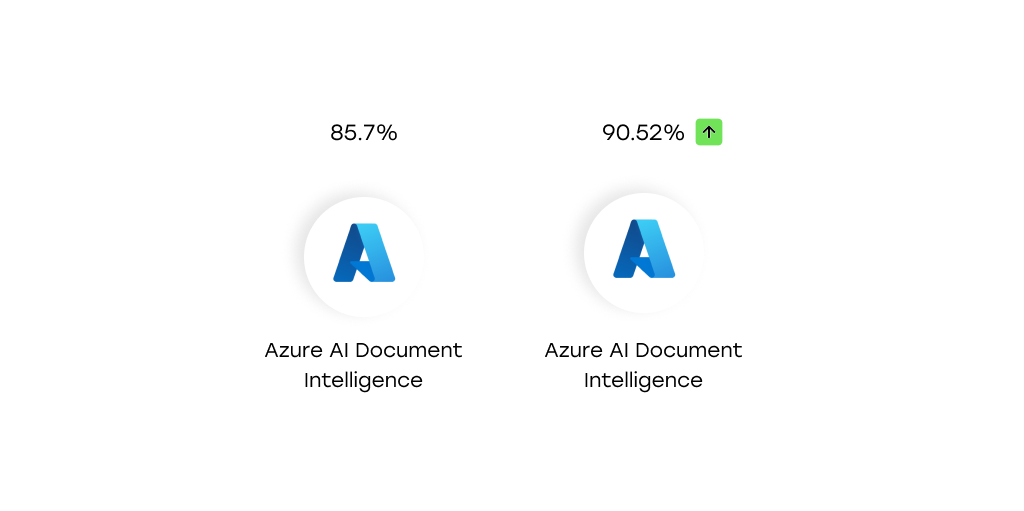

Another major shift in the benchmark results is the improvement observed in Azure AI Document Intelligence.

In the previous benchmark, Azure achieved 85.7% accuracy, placing it in the middle of the evaluated systems. In the current benchmark, its performance increased to 90.52%, representing an improvement of approximately five percentage points.

This increase moves Azure into the top-performing group, placing it close to models such as Claude Sonnet 4.5 and Gemini 3 Flash.

Performance Decline in AWS

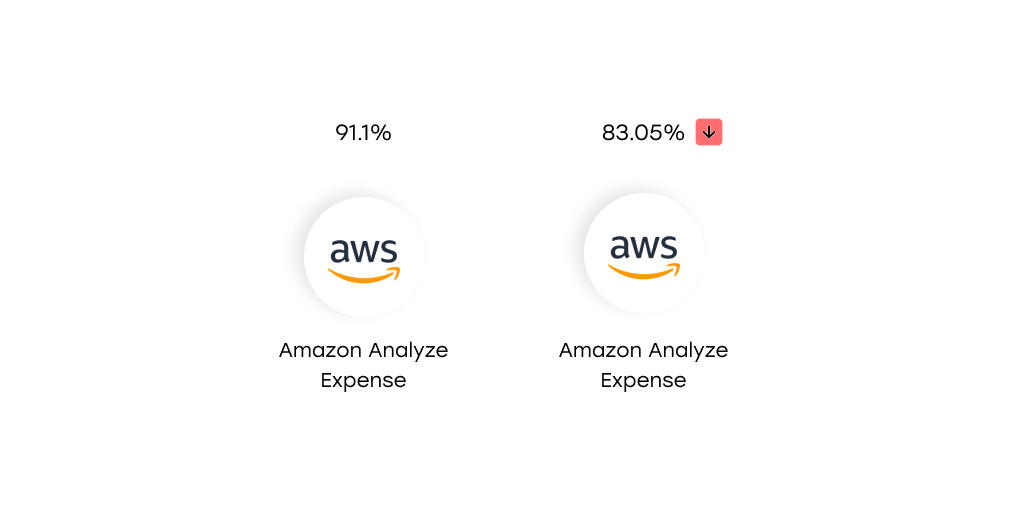

While several systems improved, Amazon Analyze Expense showed a noticeable decline in performance.

In the previous benchmark, AWS achieved 91.1% accuracy, placing it among the leading solutions. In the current benchmark, its accuracy decreased to 83.05%, representing a drop of roughly eight percentage points.

This is the largest negative shift observed among the evaluated systems and moves AWS from the top tier of performance into a lower position within the current benchmark.

Improvement in Google Document AI

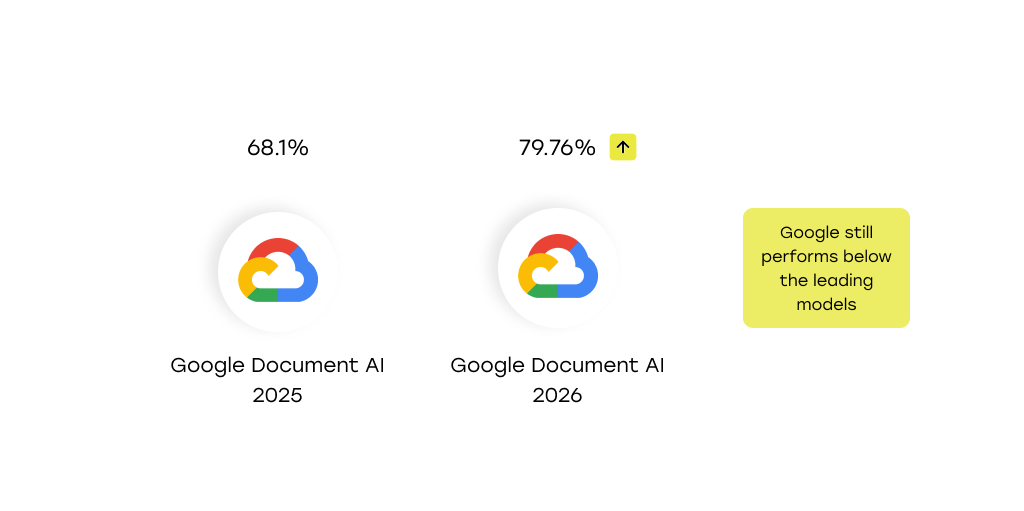

Google Document AI also shows measurable improvement compared to the previous evaluation.

In the earlier benchmark, Google achieved 68.1% accuracy. In the current benchmark, its performance increased to 79.76%, representing an improvement of approximately 12 percentage points.

A key factor behind this improvement is the way item data is returned. In the previous benchmark, Google provided item information as full item rows rather than structured attributes. In the current benchmark, item attributes are available as separate structured fields, making comparison and evaluation more consistent with other systems.

Note that Google still performs below the leading models in the benchmark.
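The percentage-point changes quoted for the three document AI services follow directly from the reported accuracies. The short calculation below reproduces them; the dictionary and function names are illustrative only.

```python
# (previous benchmark accuracy, current benchmark accuracy), as reported above.
REPORTED = {
    "Azure AI Document Intelligence": (85.7, 90.52),
    "AWS Analyze Expense": (91.1, 83.05),
    "Google Document AI": (68.1, 79.76),
}


def point_change(previous: float, current: float) -> float:
    """Difference in percentage points, rounded to two decimals."""
    return round(current - previous, 2)


changes = {name: point_change(prev, cur) for name, (prev, cur) in REPORTED.items()}

for name, delta in changes.items():
    print(f"{name}: {delta:+.2f} points")
# Azure AI Document Intelligence: +4.82 points
# AWS Analyze Expense: -8.05 points
# Google Document AI: +11.66 points
```

These are differences in percentage points, not relative changes: Google's gain of 11.66 points, for instance, corresponds to a larger relative improvement because it started from a lower base.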

Increasing Separation Between Multimodal LLMs and Classical OCR Pipelines

The results of the current benchmark show a clearer distinction between multimodal LLM-based systems and traditional document AI services.

Claude Sonnet 4.5, Gemini 3 Flash, and Gemini 3 Pro all sit in the top-performing group, and the GPT variants (around 88%) outperform AWS (83.05%) and Google Document AI (79.76%). Azure (90.52%) is the exception among the classical OCR-based pipelines, finishing ahead of both GPT models.

While traditional document AI systems remain competitive, particularly in the case of Azure, multimodal models demonstrate a stronger ability to interpret document layouts and extract structured information.

Comparable Performance Between Large and Lightweight LLM Variants

Another interesting observation from the benchmark is the relatively small performance difference between large and lightweight language models.

For example, GPT 5.2 and GPT 5 Mini achieved very similar accuracy results, both around 88%.

This suggests that invoice extraction may rely more on structured document interpretation than on advanced reasoning capabilities, reducing the performance benefit of larger model sizes for this task.

Conclusion: AI Invoice Processing in 2026

This benchmark highlights several clear developments in AI-powered invoice extraction.

- Gemini 3 Pro sets a new benchmark for accuracy: with 94.75% extraction accuracy, Gemini 3 Pro achieved the best performance among all tested systems, establishing a new top result for this benchmark series.

- Multiple systems now exceed 90% accuracy: Azure AI Document Intelligence and Claude Sonnet 4.5 both achieved results above 90%, showing that several solutions are now capable of high-quality invoice extraction without custom training.

- Multimodal LLMs continue to strengthen their position: Models such as Gemini, Claude, and GPT demonstrate strong capabilities in interpreting document layouts and extracting structured data directly from invoice images.

- Lightweight models can remain competitive: The small gap between GPT 5.2 and GPT 5 Mini suggests that invoice extraction relies more on structured document interpretation than on complex reasoning, allowing smaller models to perform competitively.

Overall, the results show that AI-driven invoice processing is becoming increasingly reliable, with multiple systems now capable of delivering high extraction accuracy for real-world business documents.