No-Code Machine Learning Platform

First Step AI is a startup that aims to bring machine learning to the masses. The main goal of this project was to create a system simple enough for the average user to train their own image recognition model, yet flexible enough to meet the needs of software developers and machine learning specialists. We developed a model training platform that lets users create, train, and test their own ML models. The platform consists of several modules that reflect the user's journey.

AI Validation Service

MVP Development

2 Data Scientists

UX Designer

Project Manager

At-home ML Users

Collection Of Projects

Every model trained on the platform lives in a project: a collection of all the data relevant to that model. This gives users a quick overview of all their projects and the status of each.

Dataset Management

The platform allows users to add and annotate their own datasets by uploading images and marking them up. Before markup starts, users create labels for the objects that need to be detected; these labels are then applied during markup.

After the images are ready, the platform analyzes the dataset and reports relevant statistics, such as the total image count, along with a balancing score that shows how evenly the annotated objects are distributed across classes. This helps the user build a well-balanced dataset for efficient model training. In general, the more balanced the dataset is, the higher the resulting mAP (mean average precision) tends to be.
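The exact formula behind the platform's balancing score is not described here. As a minimal sketch of the idea, one common way to measure class balance is normalized Shannon entropy of the per-class object counts: 1.0 means every class has the same number of objects, and values near 0 mean one class dominates. The function name `balance_score` and the input shape (a mapping from label to object count) are assumptions made for illustration.

```python
import math

def balance_score(object_counts):
    """Normalized Shannon entropy of per-class object counts, in [0, 1].

    object_counts maps each label to the number of annotated objects.
    Labels with zero objects still count as classes, so they lower the score.
    This is an illustrative metric, not the platform's actual formula.
    """
    num_classes = len(object_counts)
    total = sum(object_counts.values())
    if total == 0:
        return 0.0  # nothing annotated yet
    if num_classes == 1:
        return 1.0  # a single class is trivially balanced
    entropy = -sum(
        (n / total) * math.log(n / total)
        for n in object_counts.values()
        if n > 0  # 0 * log(0) is treated as 0
    )
    return entropy / math.log(num_classes)

if __name__ == "__main__":
    print(balance_score({"cat": 50, "dog": 50}))  # evenly split
    print(balance_score({"cat": 90, "dog": 10}))  # skewed toward one class
```

A low score on a dataset like the second one suggests adding more images of the under-represented class before training.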

Image Recognition Model Training

Once the dataset is complete, users can start training their own machine learning model. They can choose TensorFlow or PyTorch, with various base models and input sizes. For model training we use Amazon SageMaker, which allows for fast training and significant savings for the client, as they only pay for effective training time. During training, users get real-time information on how the model's detection accuracy is improving.

After the model has been trained, users can check the final mAP and decide whether it is high enough or whether they want to improve it. In the latter case, users can go back to the dataset and add more images or improve its balancing score.

Model Testing And Download

If users are happy with the mAP, they can test their models within the platform by uploading images and videos that were not part of the training dataset and seeing how well the model detects the objects. Once testing is complete, users can download the model in several formats, depending on how it will be used:

- APK - a ready-made Android mobile app with a user-trained model built-in

- APK source code - ideal for mobile developers and integration into mobile apps

- Various formats for installation in different environments (servers, mobile phones, IoT or other edge devices): TFLite (quantized or non-quantized; Int8/Float16/Float32), Saved Model (.pb), or YOLOv5 (.pt)

3D Model Generation

An additional feature, 3D model generation allows users to upload photos of any object and get a detailed 3D model, which can then be used for 3D printing.

ChatGPT Assistant

We have created a ChatGPT-based chat assistant to help users train models quickly and easily. Users type their project requirements, and the assistant generates instructions on how to use the system most efficiently.

Forest Fire Detection Neural Network Trained Using First Step AI

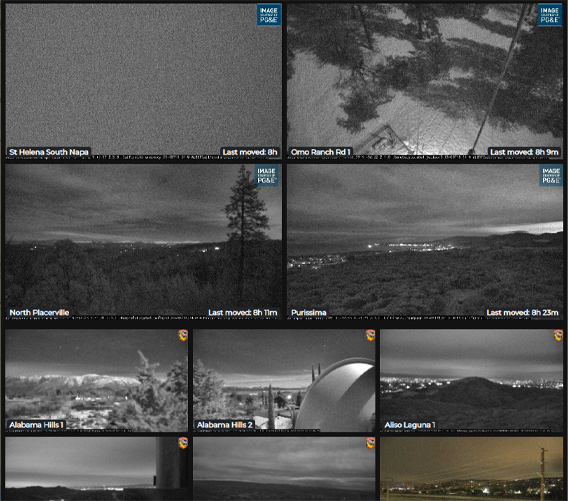

First Step AI allows users to train robust neural networks capable of detecting complex events and to easily build mobile and web apps on top of those networks. We have trained a neural network to detect forest fires: it pulls images from webcams around the world and analyzes them for signs of a forest fire, such as smoke or flames. When the network detects these signs, it sends alerts through a web interface, where a user can review all detected forest fires and watch the video feeds from the cameras.
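The alerting step described above can be sketched as a simple filter over detection results. This is an illustration only: the `Detection` record, the `cameras_to_alert` function, the label names, and the 0.6 confidence threshold are all hypothetical and not taken from the actual system.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    camera_id: str     # hypothetical webcam identifier
    label: str         # class predicted by the network, e.g. "smoke"
    confidence: float  # detection confidence in the range 0..1

# Labels treated as signs of a forest fire (assumed names).
FIRE_LABELS = {"smoke", "flames"}

def cameras_to_alert(detections, min_confidence=0.6):
    """Return sorted IDs of cameras with at least one confident fire sign."""
    return sorted({
        d.camera_id
        for d in detections
        if d.label in FIRE_LABELS and d.confidence >= min_confidence
    })

if __name__ == "__main__":
    frame_results = [
        Detection("cam-12", "smoke", 0.91),
        Detection("cam-12", "flames", 0.40),
        Detection("cam-07", "cloud", 0.88),
        Detection("cam-03", "flames", 0.75),
    ]
    print(cameras_to_alert(frame_results))
```

Grouping by camera rather than by individual detection keeps the alert list short when a single feed shows both smoke and flames.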

The user can control the web camera through the system's interface by zooming in or out to get a better look at the forest fire. We have integrated a meteorological service which allows users to see a wind map and predict the direction in which the fire will spread.
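As a rough illustration of how a wind map relates to spread direction: meteorological wind direction is conventionally reported as the bearing the wind blows from, so a first-order estimate of where a fire heads is the opposite bearing. The sketch below assumes that convention and ignores terrain, fuel, and wind speed, which a real prediction would need; the function names are hypothetical.

```python
COMPASS_POINTS = ["N", "NE", "E", "SE", "S", "SW", "W", "NW"]

def downwind_bearing(wind_from_degrees):
    """Bearing (0-360, clockwise from north) that the wind blows toward."""
    return (wind_from_degrees + 180.0) % 360.0

def compass_point(bearing_degrees):
    """Nearest of the eight principal compass points for a bearing."""
    index = int(((bearing_degrees % 360.0) + 22.5) // 45) % 8
    return COMPASS_POINTS[index]

if __name__ == "__main__":
    wind_from = 270.0  # wind reported as coming from the west
    heading = downwind_bearing(wind_from)
    print(f"Likely spread heading: {heading:.0f} degrees ({compass_point(heading)})")
```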

Architecture And Tech Stack

The platform takes advantage of various Amazon services, both for model training and for the platform's own architecture. We used AWS Amplify to implement a serverless architecture, which shortens development time and eliminates the need for dedicated DevOps, saving the client time and resources. Amazon SageMaker is used for model training, as it removes the need to orchestrate a dedicated training environment.

We have implemented a second-tier backend in Python to accommodate SageMaker and any features the growing startup might need in the future.

Results

The platform is a simple-to-use, elegant solution to the high barrier to entry in machine learning development. While it is simple enough for the average user, it is also a valuable tool for software development teams that need to add machine learning to their projects but are not ready to hire machine learning developers.

The platform is fully operational and is now in the soft launch stage. We continue to support the system and plan to introduce improvements and new features in the near future.
